In the early 2020s, serverless was often dismissed as a tool for “glue code” or simple cron jobs. Fast-forward to 2026, and the narrative has shifted entirely. Serverless architecture is now a leading choice for building hyper-scalable AI agents, real-time data streaming pipelines, and global microservices.

But “serverless” is a spectrum, not a single product. To build effectively, you must understand the underlying mechanics, the architectural tradeoffs, and—most importantly—the specific limitations of the platform you choose.

I. The Mechanics: How Serverless Actually Works

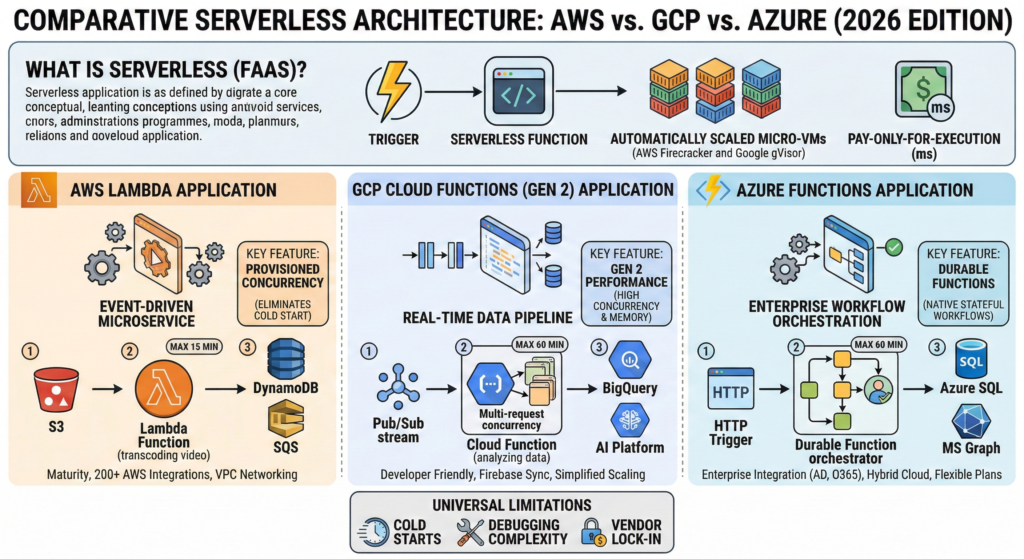

To the developer, serverless feels like magic: you upload code, and it runs. Under the hood, however, it is a sophisticated orchestration of Function-as-a-Service (FaaS) and Backend-as-a-Service (BaaS).

The Lifecycle of a Function Invocation

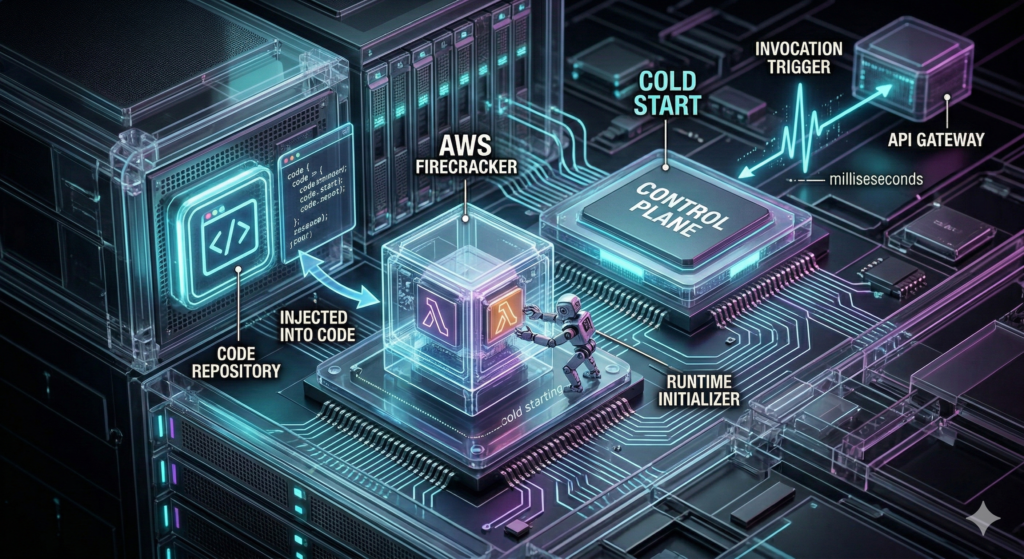

- The Trigger: An event occurs. This could be an HTTP request via an API Gateway, a new file landing in an S3 bucket, or a message appearing in a Kafka stream.

- The Control Plane: The cloud provider’s orchestrator receives the signal. It checks if a “warm” container (one that recently ran your code) is available.

- The Provisioning (Cold Start): If no warm container exists, the provider must perform a “cold start.” It pulls your code package, starts a fresh micro-VM (like AWS Firecracker), initializes the runtime (Python, Node.js, Go, etc.) inside it, and runs your function’s initialization code before handling the request.

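- The Execution: Your function runs. Treat it as stateless: a warm container may still hold variables or temporary files from an earlier run, but nothing guarantees it, so anything that must persist between invocations belongs in an external store such as a database.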

- The Reclamation: After execution, the container typically stays “warm” for a while (often minutes, though providers do not guarantee how long) so it can serve the next request, and is then destroyed to free resources.
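The warm/cold distinction shows up directly in code. A minimal sketch, assuming Python on AWS Lambda, where the runtime calls a `handler(event, context)` function: module-level code runs once per cold start, while the handler body runs on every invocation. The counter and the response fields are illustrative, not part of any API.

```python
import time

# Module-level code runs once, during the cold start's init phase.
# Expensive setup (SDK clients, config, model weights) belongs here so that
# warm invocations can reuse it.
_CONTAINER_STARTED_AT = time.time()
_invocation_count = 0  # illustrative: lives only as long as this container


def handler(event, context):
    """Runs on every invocation and reuses whatever the init phase created."""
    global _invocation_count
    _invocation_count += 1
    return {
        # The first invocation in a fresh container is the cold one.
        "cold_start": _invocation_count == 1,
        "invocations_in_container": _invocation_count,
        "container_age_s": round(time.time() - _CONTAINER_STARTED_AT, 3),
    }
```

Calling `handler` twice in the same process returns `cold_start: True` and then `False`. Because the counter vanishes when the provider reclaims the container, it is useful for observing cold starts but never for storing application state.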

II. Architectural Patterns: Moving Beyond “Hello World”

Modern serverless architecture relies on three core patterns:

1. Event-Driven Microservices

Instead of one large server, your app is a collection of functions.

- Example: A user signs up (Event). That single event triggers three independent functions: Function A sends a welcome email, Function B creates a database record, and Function C starts a KYC (Know Your Customer) check.
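A minimal sketch of this pattern, assuming the sign-up event is delivered separately to each function. An in-memory event bus stands in for a managed router such as Amazon SNS or EventBridge; the topic name `user.signed_up` and the three subscriber functions are hypothetical.

```python
from collections import defaultdict
from typing import Callable

# Hypothetical stand-in for a managed event bus: topic -> subscriber functions.
_subscribers: dict[str, list[Callable[[dict], str]]] = defaultdict(list)


def subscribe(topic: str):
    """Decorator that registers a function as a subscriber to a topic."""
    def register(fn: Callable[[dict], str]):
        _subscribers[topic].append(fn)
        return fn
    return register


def publish(topic: str, event: dict) -> list[str]:
    """Deliver the event to every subscriber; none of them knows about the others."""
    return [fn(event) for fn in _subscribers[topic]]


@subscribe("user.signed_up")
def send_welcome_email(event: dict) -> str:
    return f"email queued for {event['email']}"


@subscribe("user.signed_up")
def create_user_record(event: dict) -> str:
    return f"record created for {event['user_id']}"


@subscribe("user.signed_up")
def start_kyc_check(event: dict) -> str:
    return f"KYC started for {event['user_id']}"
```

`publish("user.signed_up", {"user_id": "u-42", "email": "a@example.com"})` runs all three handlers. Adding a fourth reaction to sign-ups means adding a subscriber, not touching the code that publishes the event. Delivery here is sequential for simplicity; a managed bus invokes each subscriber separately and retries failures per subscriber.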

2. The “Fan-Out” Pattern

Serverless is unparalleled at parallel processing.

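- Example: You upload a 1 GB video. A single function “fans out” the work to 100 simultaneous functions, each transcoding a 10-second segment. If the job takes an hour (3,600 seconds) on a single server, splitting it 100 ways leaves about 36 seconds of parallel work per function, or roughly a minute once you add the overhead of splitting the file and stitching the segments back together.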
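The fan-out step comes down to splitting the job into independent units and dispatching them in parallel. A minimal sketch, assuming a thread pool stands in for parallel function invocations (on AWS this might be asynchronous Lambda invocations or a Step Functions Map state); `transcode_segment` is a hypothetical placeholder for the real work.

```python
from concurrent.futures import ThreadPoolExecutor

SEGMENT_SECONDS = 10  # segment length from the example above


def plan_segments(duration_s: int, segment_s: int = SEGMENT_SECONDS) -> list[tuple[int, int]]:
    """Split a video of duration_s seconds into [start, end) segments."""
    return [(start, min(start + segment_s, duration_s))
            for start in range(0, duration_s, segment_s)]


def transcode_segment(segment: tuple[int, int]) -> str:
    """Hypothetical worker: in production, one function invocation per segment."""
    start, end = segment
    return f"segment_{start:05d}-{end:05d}.mp4"


def fan_out(duration_s: int, max_parallel: int = 100) -> list[str]:
    """Dispatch every segment in parallel and collect the results in order (fan-in)."""
    segments = plan_segments(duration_s)
    with ThreadPoolExecutor(max_workers=max_parallel) as pool:
        return list(pool.map(transcode_segment, segments))
```

A 1,000-second video yields exactly 100 ten-second segments, matching the 100 parallel workers in the example. `pool.map` preserves input order, so the outputs can be stitched back together in sequence.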

3. Stateful Orchestration

Since functions are stateless, you need a “brain” to manage long-running tasks. This is where AWS Step Functions, Azure Durable Functions, and Google Cloud Workflows come in. They maintain the state of a multi-step process even if the individual functions finish and disappear.
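The core idea behind these orchestrators can be sketched in a few lines: record each completed step in durable storage so that a retry resumes where the last run stopped instead of starting over. This is a toy illustration, not how any of the three services is implemented; the `state_store` mapping stands in for the orchestrator’s own persistence, and the step names are hypothetical.

```python
import json
from typing import Callable, MutableMapping

Step = tuple[str, Callable[[dict], dict]]


def run_workflow(steps: list[Step], state_store: MutableMapping[str, str], workflow_id: str) -> dict:
    """Run steps in order, checkpointing after each so a rerun resumes mid-workflow."""
    # Load the last checkpoint for this workflow, or start fresh.
    record = json.loads(state_store.get(workflow_id, '{"completed": [], "data": {}}'))
    for name, fn in steps:
        if name in record["completed"]:
            continue  # finished in an earlier run; do not repeat it
        record["data"] = fn(record["data"])  # each step is a stateless function
        record["completed"].append(name)
        state_store[workflow_id] = json.dumps(record)  # checkpoint
    return record["data"]
```

If the second step raises, the first step’s result is already checkpointed, so calling `run_workflow` again with the same `workflow_id` skips straight to the failed step. The step functions hold no state themselves, so each one could run on a fresh container.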

III. The Big Three: Functional Differences & Limitations

While all three providers offer the same basic promise, their internal philosophies—and their limitations—differ significantly.

1. AWS Lambda: The Mature Titan

AWS Lambda is the benchmark for the industry. Its greatest strength is its ecosystem. If you use AWS for storage (S3) or databases (DynamoDB), Lambda is natively integrated.

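- Key Functionality: It offers Provisioned Concurrency, which lets you pay a baseline fee to keep a set number of execution environments initialized and “warm.” This eliminates cold starts for traffic up to that level, making it a standard fix for latency-sensitive, high-traffic APIs.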

- The Limitations:

- Maximum Execution: 15 minutes. If your task takes 15 minutes and 1 second, it will be killed.

- Payload Size: Requests are limited to 6MB (synchronous) or 256KB (asynchronous). You cannot send a large image directly to the function; you must upload it to S3 first.

- Networking: Connecting Lambda to a Private VPC can still introduce slight latency penalties compared to “public” functions.
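The usual workaround for the payload limit is the “claim check” pattern: store the large body in object storage and send only a reference to it. A minimal sketch, assuming an in-memory dict in place of S3; the key scheme and helper names are hypothetical, and the 256 KB threshold is the asynchronous limit quoted above.

```python
import hashlib
import json

ASYNC_PAYLOAD_LIMIT = 256 * 1024  # bytes; asynchronous invocation limit quoted above
object_store: dict[str, bytes] = {}  # hypothetical stand-in for an S3 bucket


def build_message(payload: dict, limit: int = ASYNC_PAYLOAD_LIMIT) -> dict:
    """Inline small payloads; replace large ones with a reference (the claim check)."""
    body = json.dumps(payload).encode("utf-8")
    if len(body) <= limit:
        return {"inline": payload}
    key = "payloads/" + hashlib.sha256(body).hexdigest()
    object_store[key] = body  # in production: upload to S3 before invoking
    return {"ref": key}


def resolve_message(message: dict) -> dict:
    """Receiving side: fetch the full payload if only a reference was sent."""
    if "inline" in message:
        return message["inline"]
    return json.loads(object_store[message["ref"]])
```

Hashing the body gives a deterministic key, so resending the same payload does not create duplicate objects. In practice you may want some headroom below the threshold rather than comparing against it exactly.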

2. Google Cloud Functions (GCF): The Data Scientist’s Dream

Google’s GCF (now rebranded as Cloud Run functions) is built for speed and data processing.

- Key Functionality: Google supports concurrency within a single instance. While AWS Lambda runs one request per execution environment, Google can serve multiple requests from one instance, which can substantially reduce costs and cold starts for high-volume apps.

- The Limitations:

- Ecosystem Breadth: While GCF is great for Firebase and BigQuery, it has fewer “enterprise” triggers (like legacy ERP connectors) than Azure or AWS.

- Regional Availability: GCP’s regional footprint differs from AWS’s, so your users may sit closer to an AWS region than to a GCP one, which can matter for ultra-low-latency requirements in specific parts of the world.

- Complexity of Gen 2: The shift to “Gen 2” (built on Cloud Run) adds power but also adds configuration complexity regarding underlying container settings.
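The cost effect of per-instance concurrency is easy to estimate: the number of instances needed is roughly the number of in-flight requests divided by how many requests each instance may serve at once. A minimal sketch; the figures below are illustrative, not platform defaults.

```python
import math


def instances_needed(in_flight_requests: int, per_instance_concurrency: int) -> int:
    """Rough lower bound on instances required to serve a number of concurrent requests."""
    if per_instance_concurrency < 1:
        raise ValueError("per_instance_concurrency must be at least 1")
    return math.ceil(in_flight_requests / per_instance_concurrency)


# One request per instance (the Lambda model) vs. 80 requests per instance.
one_per_instance = instances_needed(1000, 1)   # 1000 instances
shared_instances = instances_needed(1000, 80)  # 13 instances
```

Fewer instances means fewer cold starts, but only if the code is safe to run concurrently (no unsynchronized shared globals) and each instance has enough CPU and memory for all the requests it is serving.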

3. Azure Functions: The Enterprise Architect

Azure doesn’t just offer functions; it offers a “hosting platform” that feels familiar to enterprise developers.

- Key Functionality: Durable Functions. Azure lets you write “stateful” workflows directly in code (C#, JavaScript, Python, and other supported languages) rather than in a separate definition language, such as the JSON-based Amazon States Language behind AWS Step Functions (which also offers a visual designer). This is a game-changer for complex business logic.

- The Limitations:

- Cold Start (Consumption Plan): Historically, Azure’s pay-per-execution “Consumption Plan” has had higher cold start latency than AWS. The usual fix is upgrading to the “Premium Plan,” which keeps pre-warmed instances ready at a higher baseline cost.

- Portal Complexity: The Azure Portal is notoriously dense. Finding the right setting for your function can feel like navigating a maze compared to Google’s streamlined UI.

- Language Latency: While .NET is lightning fast, Python and Java performance on Azure have historically lagged slightly behind AWS.
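To show what “workflows in code” means, here is a toy orchestrator in plain Python that mimics the Durable Functions style: the orchestrator is a generator that yields activity calls and receives their results. This is not the `azure-functions-durable` API; the real framework also persists an event history and replays the orchestrator from it. The activity names and claim rules are hypothetical.

```python
from typing import Callable, Generator


# Hypothetical activities: in Durable Functions these would be separate activity functions.
def validate_claim(claim: dict) -> dict:
    return {**claim, "valid": claim["amount"] > 0}


def assess_claim(claim: dict) -> dict:
    return {**claim, "auto_approve": claim["amount"] < 10_000}


ACTIVITIES: dict[str, Callable[[dict], dict]] = {
    "validate_claim": validate_claim,
    "assess_claim": assess_claim,
}


def claim_orchestrator(claim: dict) -> Generator[tuple[str, dict], dict, dict]:
    """Ordinary control flow (if/else) decides which activity runs next."""
    claim = yield ("validate_claim", claim)
    if not claim["valid"]:
        return {"status": "rejected"}
    claim = yield ("assess_claim", claim)
    return {"status": "approved" if claim["auto_approve"] else "needs_human_review"}


def run_orchestration(orchestrator, payload: dict) -> dict:
    """Toy runner: execute each yielded activity and send its result back in."""
    gen = orchestrator(payload)
    try:
        name, arg = next(gen)
        while True:
            name, arg = gen.send(ACTIVITIES[name](arg))
    except StopIteration as done:
        return done.value
```

The `needs_human_review` branch is where a real workflow would wait for an external event, such as an approver’s decision; Durable Functions supports waiting for external events without keeping any function running in the meantime.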

IV. Critical Comparison Table (2026 Standards)

| Feature | AWS Lambda | Google Cloud Functions (Gen 2) | Azure Functions |
| --- | --- | --- | --- |
| Max Execution Time | 15 Minutes | 60 Minutes (HTTP); 9 Minutes (event-driven) | 60 Minutes (Premium) |
| Max Memory | 10 GB | 16 GB | 14 GB |
| Scaling Speed | Instant (Burst of 1000s) | Fast (Instance-based) | Adaptive (Slower on Consumption) |
| Concurrency | 1 Request / Instance | Multiple Requests / Instance | Multiple Requests / Instance |
| State Management | Step Functions (Visual) | Workflows (YAML) | Durable Functions (Code) |
| Networking | VPC-Heavy | Simplified Shared VPC | Virtual Network (VNet) |
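Lambda’s 15-minute ceiling, the lowest execution limit in the table, is why long jobs on Lambda are usually written to stop early and hand off. A minimal sketch, assuming a batch of items arrives in the event: the Lambda Python context object’s `get_remaining_time_in_millis()` reports how much time is left, and the handler returns a cursor before the deadline so a follow-up invocation (or an orchestrator loop) can continue. `process_item` and the 10-second safety margin are hypothetical choices.

```python
SAFETY_MARGIN_MS = 10_000  # stop with this much time left (hypothetical choice)


def process_item(item):
    """Hypothetical unit of work."""
    return item * 2


def handler(event, context):
    items = event["items"]
    cursor = event.get("cursor", 0)  # where the previous invocation stopped
    results = []
    while cursor < len(items):
        if context.get_remaining_time_in_millis() < SAFETY_MARGIN_MS:
            # Hand off before the platform kills the invocation.
            return {"done": False, "cursor": cursor, "results": results}
        results.append(process_item(items[cursor]))
        cursor += 1
    return {"done": True, "cursor": cursor, "results": results}
```

The caller re-invokes the function with the returned `cursor` until `done` is true, so no single invocation needs to fit the whole job inside the limit.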

V. The “Hidden” Limitations of Serverless

Regardless of the provider, there are four “universal truths” that can break a serverless project if not planned for:

1. The Cold Start Reality

If your app is used sporadically, the first request after an idle period will usually wait for a cold start.

- Solution: Use “Warmup” plugins or pay for “Always Ready” instances (AWS Provisioned Concurrency or Azure Premium).
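To size “Always Ready” capacity, AWS’s Lambda documentation estimates concurrency as requests per second multiplied by average request duration in seconds. A minimal sketch of that estimate; the headroom factor is an assumption you would tune against real traffic.

```python
import math


def provisioned_concurrency_estimate(requests_per_second: float,
                                     avg_duration_ms: float,
                                     headroom: float = 1.0) -> int:
    """Estimate pre-warmed environments needed: RPS x average duration (s) x headroom."""
    concurrency = requests_per_second * (avg_duration_ms / 1000) * headroom
    return math.ceil(concurrency)


# 100 requests/s at 500 ms each keeps about 50 environments busy at once.
baseline = provisioned_concurrency_estimate(100, 500)           # 50
with_buffer = provisioned_concurrency_estimate(100, 500, 1.5)   # 75
```

Requests beyond the provisioned level still scale on demand and can still hit cold starts, so the headroom factor trades baseline cost against how often that happens.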

2. The “Noisy Neighbor” Effect

In a serverless environment, you share underlying hardware with other companies. While cloud providers use “Sandboxing” (like GCP’s gVisor or AWS Firecracker) to isolate you, you may still see slight fluctuations in CPU performance during peak global traffic.

3. Debugging and Observability

You cannot SSH into a serverless function. If it fails, you are entirely dependent on logs.

- Strategic Shift: You must invest in Distributed Tracing (AWS X-Ray, Azure Monitor, or Datadog) to see how an event moves through ten different functions.
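Tracing tools automate this, but the underlying idea can be sketched by hand: every function logs structured JSON and passes a single correlation ID downstream, so logs from all ten functions can be joined on that field. A minimal sketch; the field names and the `resize-image` function are hypothetical.

```python
import json
import uuid


def log_event(message: str, correlation_id: str, **fields) -> str:
    """Emit one JSON log line; log platforms can index and filter on these fields."""
    line = json.dumps({"message": message, "correlation_id": correlation_id, **fields},
                      sort_keys=True)
    print(line)
    return line


def handler(event: dict, context=None) -> dict:
    # Reuse the caller's ID if there is one; otherwise this function starts the trace.
    correlation_id = event.get("correlation_id") or str(uuid.uuid4())
    log_event("received", correlation_id, function="resize-image")
    # ... do the work ...
    log_event("completed", correlation_id, function="resize-image")
    # Forward the same ID in the event sent to the next function.
    return {"correlation_id": correlation_id, "status": "resized"}
```

Searching the logs for one `correlation_id` then reconstructs the path of a single event across every function it touched, which is the manual version of what distributed tracing gives you automatically.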

4. Vendor Lock-In

Serverless is the ultimate “Cloud Trap.” Because your code is so tightly integrated with the provider’s triggers (e.g., S3 events), moving from AWS to Azure often requires a 40–60% rewrite of your infrastructure code.
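Lock-in cannot be avoided entirely, but its blast radius can be reduced by keeping business logic free of provider event formats and translating each trigger in a thin adapter. A minimal sketch: the S3 adapter follows the S3 event notification layout (`Records[].s3.bucket.name`, `Records[].s3.object.key`, with URL-encoded keys), while the Cloud Storage adapter assumes it receives the event’s data dictionary with `bucket` and `name` fields; `process_upload` is hypothetical.

```python
from dataclasses import dataclass
from urllib.parse import unquote_plus


@dataclass(frozen=True)
class StoredObject:
    bucket: str
    key: str


def process_upload(obj: StoredObject) -> str:
    """Provider-agnostic business logic (hypothetical)."""
    return f"processed {obj.bucket}/{obj.key}"


def from_s3_event(event: dict) -> list[StoredObject]:
    # S3 notifications URL-encode object keys (spaces arrive as '+').
    return [StoredObject(r["s3"]["bucket"]["name"], unquote_plus(r["s3"]["object"]["key"]))
            for r in event["Records"]]


def from_gcs_event_data(data: dict) -> StoredObject:
    # Cloud Storage object events carry the bucket and object name in their data.
    return StoredObject(data["bucket"], data["name"])


def aws_handler(event: dict, context) -> list[str]:
    return [process_upload(obj) for obj in from_s3_event(event)]


def gcp_handler(data: dict) -> str:
    return process_upload(from_gcs_event_data(data))
```

Moving providers then means writing a new adapter while `process_upload` and its tests stay unchanged. Infrastructure definitions (triggers, permissions, queues) still have to be rewritten, which is where much of the migration cost remains.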

VI. Decision Matrix: Which One Should You Build On?

- Choose AWS Lambda if: You are building a massive, high-concurrency application and need the most reliable, “battle-tested” platform with a vast array of third-party tools.

- Choose Google Cloud Functions if: You are a startup or a data-heavy team. If your app processes millions of events from BigQuery or uses Firebase for mobile, GCP is the fastest path to production.

- Choose Azure Functions if: You are an enterprise using the .NET stack. If you need to automate complex workflows (like an insurance claim process) that require human-in-the-loop steps, Durable Functions make Azure the clear winner.

Final Thoughts

In 2026, the question is no longer “Should we use serverless?” but “Which serverless flavor fits our architecture?” By understanding the 15-minute limit of Lambda, the concurrency of Google, and the stateful power of Azure, you can build systems that don’t just scale—they scale intelligently.
