The AI industry has long believed that bigger models mean better intelligence. Companies have been racing to build models with hundreds of billions—or even trillions—of parameters.

But Alibaba’s Qwen3.5-9B is challenging that idea.

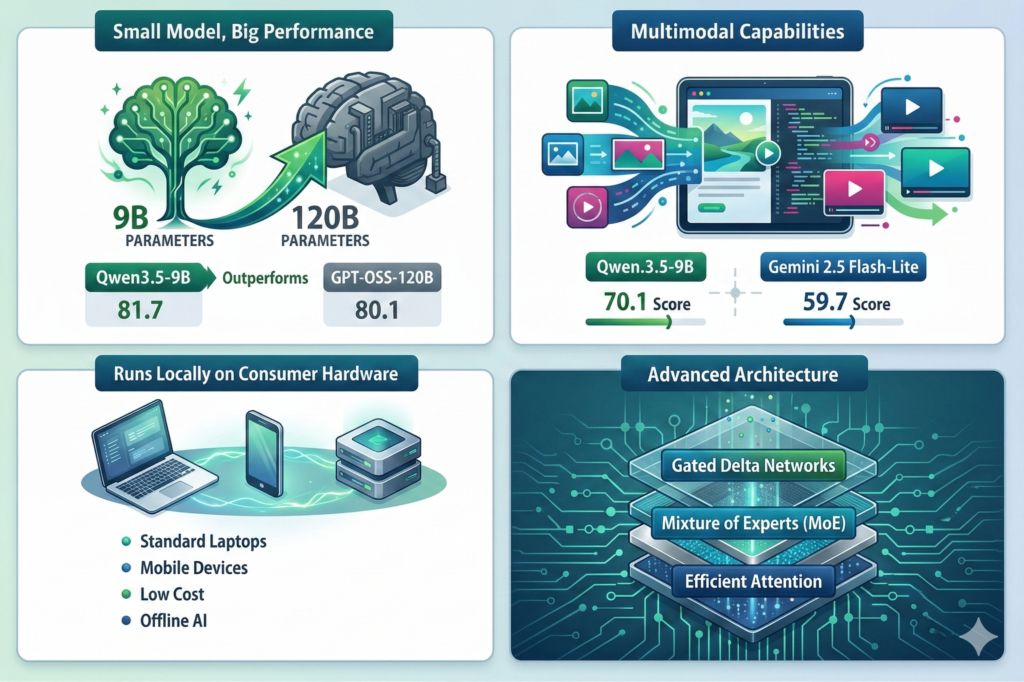

Despite having only 9 billion parameters, the model reportedly competes with or even outperforms much larger systems such as GPT-OSS-120B on several benchmarks. Even more interesting: it can run locally on consumer hardware like laptops or small GPU servers.

This marks an important shift in AI development—efficiency is starting to beat raw scale.

What Is the Qwen Model Family?

Qwen (short for Tongyi Qianwen) is a family of large language models developed by Alibaba Cloud.

The ecosystem includes multiple models designed for different environments—from edge devices to large data centers.

Qwen Model Series

| Model | Parameters | Target Deployment |
|---|---|---|
| Qwen3.5-0.8B | 0.8B | Edge devices & mobile |
| Qwen3.5-2B | 2B | Lightweight assistants |
| Qwen3.5-4B | 4B | Local AI applications |
| Qwen3.5-9B | 9B | Advanced reasoning & multimodal tasks |

The models are released with open weights, meaning developers can download them and run them locally using tools such as:

- Ollama

- vLLM

- llama.cpp

- Hugging Face Transformers

This makes them well suited to self-hosted AI systems.
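As a rough illustration of what "running locally" looks like from application code, the sketch below builds a chat request for a local server. It assumes the server exposes an OpenAI-compatible `/v1/chat/completions` endpoint, which both vLLM and Ollama offer. The port, the model identifier `Qwen/Qwen3.5-9B`, and the helper names are placeholders rather than values taken from any official documentation.

```python
import json
import urllib.request

# Assumptions (not from the article): address, port, and model identifier
# are placeholders; substitute whatever your local server actually reports.
BASE_URL = "http://localhost:8000/v1"   # vLLM's default port; Ollama listens on 11434
MODEL_ID = "Qwen/Qwen3.5-9B"            # hypothetical model identifier


def build_chat_request(prompt: str, model: str = MODEL_ID,
                       base_url: str = BASE_URL) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request for a local server."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 256,
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt: str) -> str:
    """Send the request and return the reply text (requires a running server)."""
    with urllib.request.urlopen(build_chat_request(prompt), timeout=60) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

With a server running, `chat("Explain open weights in one sentence.")` would return the model's reply. Because the request shape matches the hosted OpenAI API, switching between a hosted and a local backend is mostly a matter of changing the base URL.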

Why Qwen3.5-9B Is a Big Deal

1. Small Model, Huge Performance

The most surprising aspect of Qwen3.5-9B is its performance relative to its size.

For example, on the GPQA Diamond benchmark (a difficult reasoning test):

| Model | Score |
|---|---|
| Qwen3.5-9B | 81.7 |
| GPT-OSS-120B | 80.1 |

Despite having roughly 13× fewer parameters (9B vs. 120B), Qwen3.5-9B scores slightly higher.

This highlights a new concept in AI research:

Intelligence density — how much capability a model achieves per parameter.
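To make the idea concrete, here is a small sketch that divides each GPQA Diamond score quoted above by the model's parameter count. "Points per billion parameters" is a crude ratio chosen for illustration, not a standard metric, and the function name is made up for this example.

```python
# Scores and sizes are the GPQA Diamond figures quoted in the table above.
models = {
    "Qwen3.5-9B": {"params_b": 9, "gpqa_diamond": 81.7},
    "GPT-OSS-120B": {"params_b": 120, "gpqa_diamond": 80.1},
}


def score_per_billion(score: float, params_b: float) -> float:
    """Illustrative 'intelligence density': benchmark points per billion parameters."""
    return score / params_b


density = {
    name: score_per_billion(m["gpqa_diamond"], m["params_b"])
    for name, m in models.items()
}

for name, value in density.items():
    print(f"{name}: {value:.2f} points per billion parameters")
```

Under this simple measure, the 9B model lands at about 9.1 points per billion parameters against about 0.67 for the 120B model. The ratio ignores everything a single benchmark does not capture, so treat it as a way of framing the comparison rather than a ranking.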

2. Multimodal Intelligence

Qwen3.5-9B isn’t just a text model.

It supports multimodal reasoning, meaning it can process:

- text

- images

- visual content

- structured data

In the MMMU-Pro visual reasoning benchmark, the model achieved:

| Model | Score |
|---|---|
| Qwen3.5-9B | 70.1 |
| Gemini Flash-Lite | 59.7 |

This makes the model suitable for applications like:

- document analysis

- image-based reasoning

- visual assistants

- AI agents interacting with UI elements
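For image-based tasks like these, a request usually pairs an image with a text question. The sketch below builds such a payload using the OpenAI-style `image_url` content part with a base64 data URL. It assumes the serving layer accepts that format (vLLM's OpenAI-compatible server does for vision-capable models). The model identifier and the placeholder image bytes are hypothetical.

```python
import base64
import json

MODEL_ID = "Qwen/Qwen3.5-9B"  # hypothetical model identifier


def build_image_question(image_bytes: bytes, question: str,
                         mime: str = "image/png") -> dict:
    """Build a chat payload that pairs one image with a text question."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": MODEL_ID,
        "messages": [{
            "role": "user",
            "content": [
                {"type": "text", "text": question},
                {"type": "image_url",
                 "image_url": {"url": f"data:{mime};base64,{encoded}"}},
            ],
        }],
    }


# Placeholder bytes stand in for a real file, e.g. open("invoice.png", "rb").read()
payload = build_image_question(b"placeholder image bytes",
                               "What is the total on this invoice?")
print(json.dumps(payload, indent=2)[:200])
```

The same structure extends to several images per message by appending more `image_url` parts to the `content` list.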

3. Runs Locally on Consumer Hardware

One of the most exciting aspects of Qwen3.5-9B is its hardware efficiency.

Unlike large models that require massive GPU clusters, Qwen3.5-9B can run on:

- gaming GPUs

- local servers

- laptops

- edge devices

Typical deployment requirements:

| Hardware | Capability |
|---|---|
| CPU | Basic inference |
| 16-24 GB GPU | Fast local inference |
| Laptop with quantization | Lightweight tasks |

This dramatically reduces infrastructure cost for AI deployment.
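A back-of-the-envelope calculation shows where the GPU sizes in the table come from. The sketch below estimates the memory needed for the weights alone at a few common precisions. The bytes-per-parameter figures are the usual nominal values, and the estimate deliberately leaves out the KV cache, activations, and runtime overhead, so real usage will be higher.

```python
# Nominal storage cost per parameter at common precisions.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billion: float, precision: str) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    total_bytes = params_billion * 1e9 * BYTES_PER_PARAM[precision]
    return total_bytes / 1e9


for precision in BYTES_PER_PARAM:
    gb = weight_memory_gb(9, precision)
    print(f"9B parameters @ {precision}: ~{gb:.1f} GB of weights")
```

At 16-bit precision a 9B model needs about 18 GB for its weights, which fits a 24 GB card but not a 16 GB one. Quantizing to 4 bits brings that down to roughly 4.5 GB, which is why laptops become viable for lighter workloads.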

Qwen vs GPT Models Comparison

To understand why Qwen3.5-9B is interesting, we need to compare it with models from OpenAI.

Model Size Comparison

| Model | Organization | Parameters | Open Weights | Local Deployment |
|---|---|---|---|---|
| Qwen3.5-9B | Alibaba | 9B | Yes | Yes |
| GPT-OSS-120B | OpenAI | 120B | Yes (Apache 2.0) | Demanding (roughly an 80 GB GPU) |
| GPT-3.5 | OpenAI | Undisclosed (GPT-3 was 175B) | No | No |
| GPT-4 | OpenAI | Undisclosed (rumored 1T+) | No | No |
| GPT-4o | OpenAI | Undisclosed | No | No |

The key takeaway:

Qwen achieves competitive performance with dramatically fewer parameters.

Benchmark Comparison

| Benchmark | Qwen3.5-9B | GPT-OSS-120B | GPT-4 |
|---|---|---|---|
| GPQA Diamond | 81.7 | 80.1 | ~83 |
| MMMU-Pro | 70.1 | 65 | ~72 |
| Code Generation | Strong | Very Strong | Best |
| Multimodal | Yes | Limited | Yes |

Deployment Architecture Comparison

One of the biggest differences between Qwen and GPT models is deployment architecture.

OpenAI GPT Architecture

User

│

Application

│

OpenAI API

│

Massive GPU Cluster

│

Model Inference

│

Response

Characteristics:

- Fully managed cloud

- No infrastructure control

- Token-based pricing

Qwen Local Deployment

User Application

│

API Gateway

│

Local LLM Server

(vLLM / Ollama / llama.cpp)

│

GPU / CPU

│

Qwen3.5-9B Model

Characteristics:

- Self-hosted

- No per-token API fees (hardware and electricity costs still apply)

- Full infrastructure control

This architecture is particularly attractive for DevOps and platform engineering teams.

Cost Comparison

Running AI models locally can significantly reduce cost.

| Scenario | GPT API | Qwen Local |
|---|---|---|
| 1M tokens | $5 – $30 | ~$0.50 electricity |
| Privacy | External cloud | Local |
| Infrastructure | None | GPU required |
| Customization | Limited | Full |

For startups and enterprises with steady usage, this can make AI far cheaper to sustain, provided the up-front GPU cost is spread over enough traffic.
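The electricity figure in the table depends heavily on hardware and throughput, so it helps to see the arithmetic. The sketch below computes the power cost of generating one million tokens. The inputs (a 300 W GPU, 25 tokens per second, $0.15 per kWh) are hypothetical values chosen for illustration, not measurements of Qwen3.5-9B.

```python
def electricity_cost_per_million_tokens(gpu_watts: float,
                                        tokens_per_second: float,
                                        price_per_kwh: float) -> float:
    """Electricity cost in USD to generate 1M tokens on a single GPU."""
    seconds = 1_000_000 / tokens_per_second
    kwh = gpu_watts * seconds / 3_600_000   # watt-seconds to kilowatt-hours
    return kwh * price_per_kwh


# Hypothetical inputs: 300 W draw, 25 tokens/s, $0.15 per kWh.
cost = electricity_cost_per_million_tokens(300, 25, 0.15)
print(f"~${cost:.2f} of electricity per 1M tokens")
```

With these inputs the result is about $0.50, in line with the table. Doubling throughput halves the cost, and the figure excludes the purchase price of the GPU, cooling, and the engineering time needed to run the service.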

Real-World Use Cases

Qwen3.5-9B can power a wide range of applications.

Developer Tools

- Local coding assistants

- CI/CD AI copilots

- DevOps automation

Enterprise Applications

- Private knowledge assistants

- Document intelligence

- Customer support automation

AI Infrastructure

- Local AI inference clusters

- On-premise AI deployments

- Edge AI systems

Why the Industry Is Moving Toward Smaller Models

For years, AI progress was driven by bigger models and more compute.

But the industry is now focusing on efficiency and optimization.

Key trends include:

Architecture Innovation

New techniques like:

- Mixture of Experts (MoE)

- Efficient attention

- Advanced training strategies
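Of these techniques, Mixture of Experts is the easiest to show in a few lines. The sketch below is a generic, toy version of top-k routing: a router scores every expert, only the k best run, and their outputs are blended by softmax weights. It is not a description of Qwen3.5-9B's architecture, and the experts here are stand-in functions rather than neural network layers.

```python
import math


def softmax(values):
    """Numerically stable softmax over a list of floats."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(router_logits, k=2):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)[:k]
    weights = softmax([router_logits[i] for i in ranked])
    return list(zip(ranked, weights))


def moe_forward(x, experts, router_logits, k=2):
    """Weighted sum of the selected experts' outputs; the rest never run."""
    return sum(w * experts[i](x) for i, w in top_k_route(router_logits, k))


# Toy experts standing in for feed-forward blocks.
experts = [lambda x: x + 1, lambda x: x * 2, lambda x: x - 3, lambda x: x ** 2]
logits = [0.1, 2.0, -1.0, 2.0]   # made-up router scores for one token

print(top_k_route(logits))
print(moe_forward(3.0, experts, logits))
```

The efficiency gain comes from the skipped experts: a model can hold many parameters in total while spending compute on only a fraction of them per token.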

Local AI Deployment

Running AI models locally offers:

- lower cost

- better privacy

- lower latency (no network round trip)

Edge AI

Smaller models enable AI to run on:

- mobile devices

- IoT systems

- laptops

What This Means for DevOps and Infrastructure Engineers

For engineers building platforms and cloud systems, Qwen-style models unlock new possibilities.

Instead of relying entirely on external APIs, teams can deploy local AI infrastructure.

Example stack:

Application

│

API Gateway

│

Inference Engine (vLLM)

│

Qwen Model

│

GPU Server

This architecture enables:

- private AI systems

- offline inference

- lower operational cost
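One way a gateway in such a stack might behave is to prefer the local inference engine and fall back to a hosted API only when the local one is unavailable. The sketch below shows that selection logic in isolation. The `Backend` class, the URLs, and the stubbed health checks are all hypothetical; a real gateway would probe an actual health endpoint.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Backend:
    name: str
    base_url: str
    is_healthy: Callable[[], bool]   # stubbed here; normally an HTTP health probe


def pick_backend(backends: List[Backend]) -> Optional[Backend]:
    """Return the first healthy backend in priority order, or None."""
    for backend in backends:
        if backend.is_healthy():
            return backend
    return None


# Priority order: local GPU server first, hosted API as a fallback.
backends = [
    Backend("local-vllm", "http://gpu-server:8000/v1", is_healthy=lambda: False),
    Backend("hosted-api", "https://api.example.com/v1", is_healthy=lambda: True),
]

chosen = pick_backend(backends)
print(chosen.name if chosen else "no backend available")
```

In this run the local server is marked unhealthy, so the gateway picks the hosted fallback. Teams that need strictly offline inference would simply leave the hosted entry out of the list.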

The Future of AI: Efficiency Over Scale

Qwen3.5-9B signals a broader shift in the AI industry.

Instead of massive trillion-parameter models, the future may focus on:

- smarter architectures

- efficient training methods

- deployable AI systems

In other words, smaller models that are smarter, cheaper, and easier to run.

Final Thoughts

Alibaba’s Qwen3.5-9B is an important milestone in the evolution of open-weight AI.

By demonstrating that a 9B-parameter model can compete with models more than ten times its size, it challenges the long-standing assumption that bigger models are always better.

For developers, startups, and infrastructure teams, this means:

- AI can run locally

- costs can be drastically reduced

- innovation becomes faster

The next wave of AI may not be defined by the largest models, but by the most efficient ones.
